Manual delineation of only one image in unseen databases is sufficient for accurate performance in automated multiple sclerosis lesion segmentation

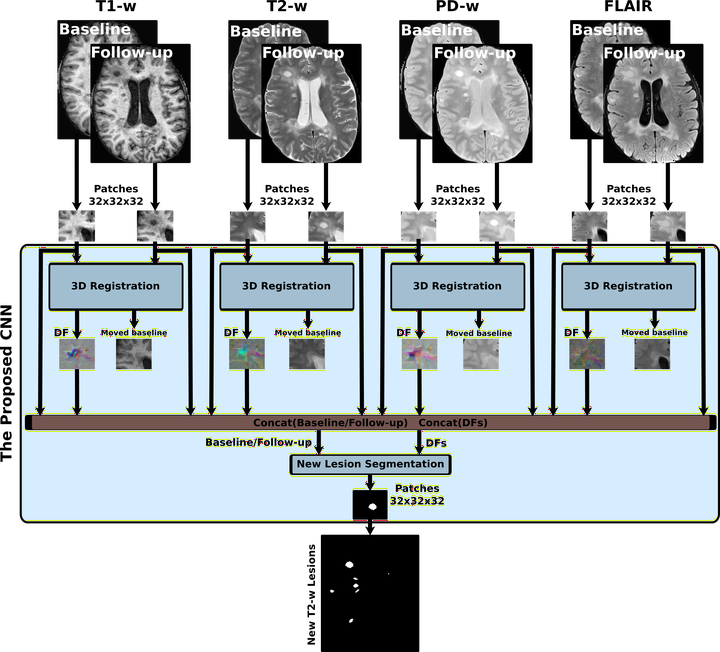

Background: Convolutional neural network (CNN) methods have been proposed for automated white matter lesion segmentation and outperform typical state-of-the-art methods. However, their accuracy decreases significantly when they are applied to image domains other than those used for training, which shows a lack of adaptability to unseen imaging data and limits their applicability in non-specialized hospitals.

Aim: To analyze the effect of domain adaptation on multiple sclerosis (MS) lesion segmentation by investigating how transferable a CNN model is when applied to unseen image domains.

Methods: An automated lesion segmentation method based on an 11-layer CNN classifier was first fully trained using 35 T1-w and FLAIR scans from the MS lesion segmentation challenges (MICCAI 2008 and 2016). Domain adaptation was then evaluated independently on two datasets composed of 60 and 61 T1-w and FLAIR images from a clinical hospital and from the public ISBI2015 challenge, respectively. For each unseen dataset, the same source model was fine-tuned by re-training only the last layers using a single image (we tested images with different lesion loads). The Dice overlap coefficient (DSC) between the resulting segmentations and the manual lesion annotations was compared with that of the same model fully trained on the target domain and with that of other methods such as LST.

Results: On the clinical dataset, the model fully trained with data from the target domain achieved DSC=0.53. When the source model was used without re-adaptation, the performance dropped to DSC=0.25, whereas adapting the source model with a single image yielded DSC values between 0.30 and 0.48, depending on the lesion load of the image used. In all cases, the adapted models showed a significant increase in accuracy with respect to LST (DSC=0.29).
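
For readers unfamiliar with the metric, the DSC values reported here follow the standard Dice definition, DSC = 2|A∩B| / (|A| + |B|), where A is the automated lesion mask and B is the manual annotation. The abstract does not describe the evaluation code, so the following is only a minimal sketch under stated assumptions: the function name `dice_coefficient`, the flat 0/1 mask representation, and the toy masks are hypothetical and chosen for illustration.

```python
def dice_coefficient(prediction, reference):
    """Dice overlap between two binary masks given as flat sequences of 0/1.

    Illustrative helper (not from the original work): real lesion masks are
    3D volumes, which would be flattened before calling this function.
    """
    if len(prediction) != len(reference):
        raise ValueError("masks must have the same number of voxels")
    # Voxels labelled as lesion in both masks.
    overlap = sum(1 for p, r in zip(prediction, reference) if p and r)
    # Total lesion voxels in each mask, added together.
    total = sum(1 for p in prediction if p) + sum(1 for r in reference if r)
    if total == 0:
        # Assumed convention: two empty masks agree perfectly.
        return 1.0
    return 2.0 * overlap / total


# Toy example: 8 voxels, 4 lesion voxels in each mask, 3 of them shared.
automatic = [0, 1, 1, 1, 0, 0, 1, 0]
manual = [0, 1, 1, 0, 0, 1, 1, 0]
print(dice_coefficient(automatic, manual))  # 2 * 3 / (4 + 4) = 0.75
```

A DSC of 1 means the automated and manual masks coincide exactly, and a DSC of 0 means they share no lesion voxels.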
On the ISBI2015 challenge, our fully trained CNN method was ranked 3rd among 59 methods, showing human-like segmentation performance. Interestingly, adapted models trained with only one image still yielded remarkably higher performance than other state-of-the-art methods such as LST or lesionToads, and performed very similarly to other CNN models trained on a larger number of images.

Conclusions: Domain adaptation allows pre-trained CNNs to be used in unseen clinical settings. A manual delineation of the lesions in only one image is sufficient to obtain accurate automated lesion segmentation.

Disclosure: S. Valverde: nothing to disclose. M. Salem: nothing to disclose. M. Cabezas: nothing to disclose. D. Pareto: has received speaking honoraria from Novartis and Biogen. J.C. Vilanova: nothing to disclose. Lluís Ramió-Torrentà: has received compensation for consulting services and speaking honoraria from Biogen, Novartis, Bayer, Merck, Sanofi, Genzyme, Teva Pharmaceutical Industries Ltd, Almirall, and Mylan. A. Rovira: serves on scientific advisory boards for Biogen Idec, Novartis, Genzyme, and OLEA Medical, and has received speaker honoraria from Bayer, Genzyme, Sanofi-Aventis, Bracco, Merck-Serono, Teva Pharmaceutical Industries Ltd, OLEA Medical, Stendhal, Novartis, and Biogen Idec. A. Oliver: nothing to disclose. J. Salvi: nothing to disclose. X. Lladó: nothing to disclose.