The Russian Language Learning Center in Assiut announces the start of a new course at the end of March 2026

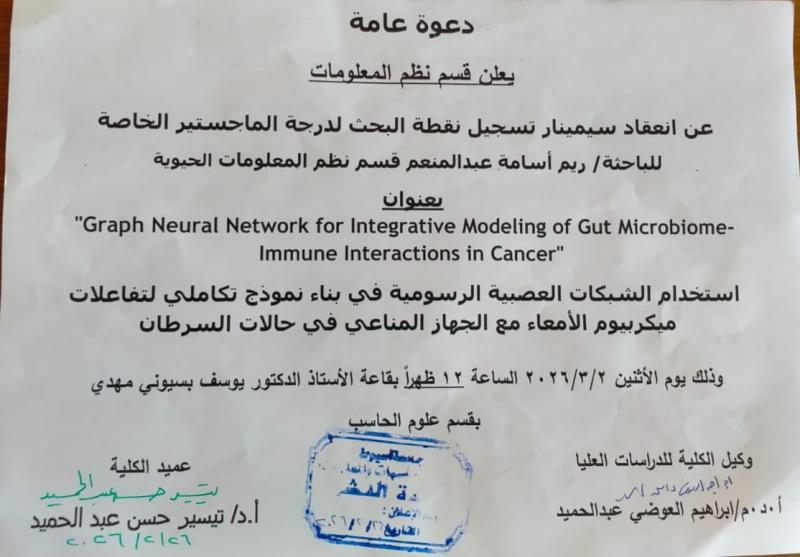

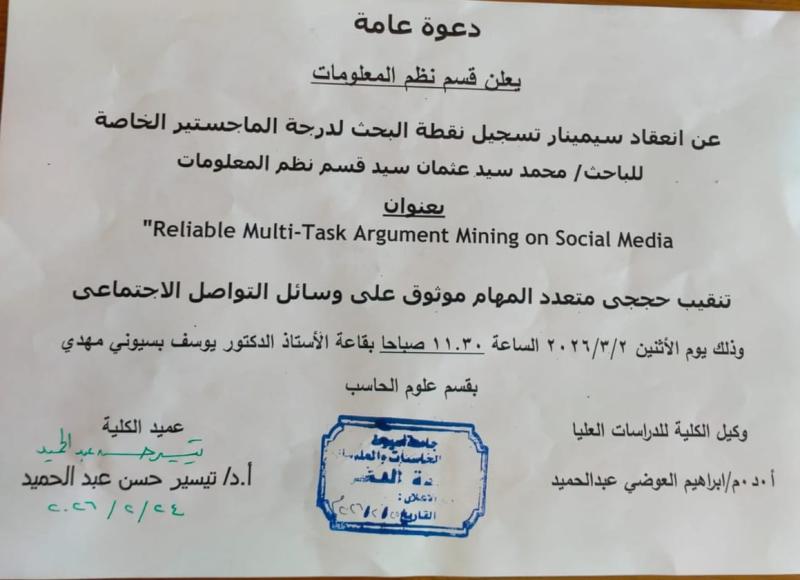

Master's Program Lecture Schedule for the Second Semester of the Academic Year 2025/2026


News category: Postgraduates

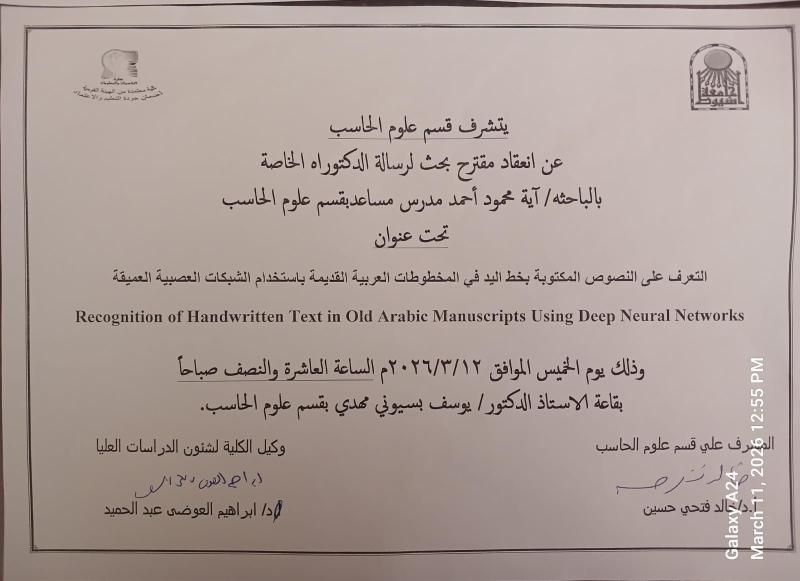

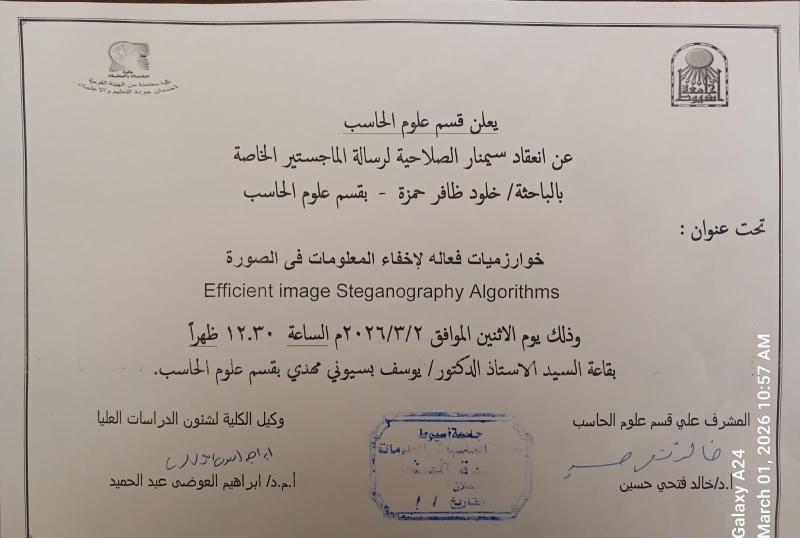

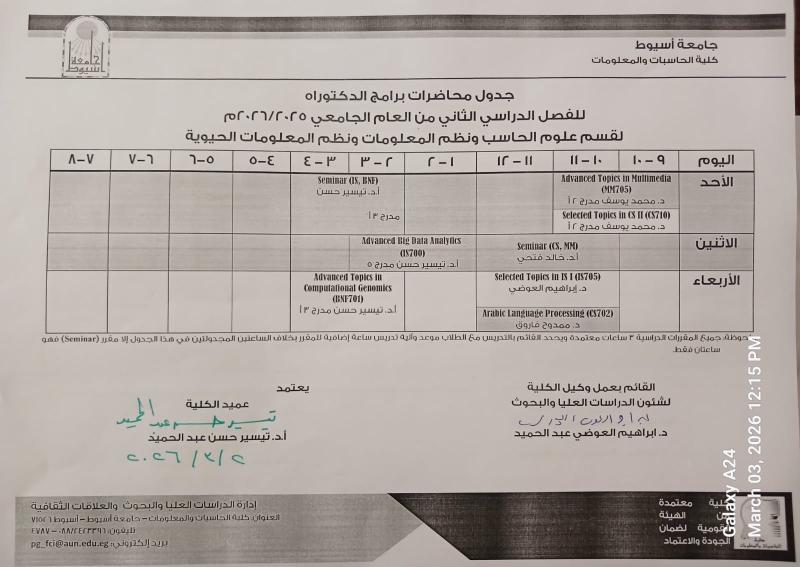

Lecture Schedule for PhD Programs for the Second Semester of the Academic Year 2025/2026, Department of Computer Science, Information Systems and Bioinformatics


News category: Postgraduates

Invitation to the Master's thesis defense of researcher Rofida Gamal Mohamed Rabie, teaching assistant in the Information Systems Department

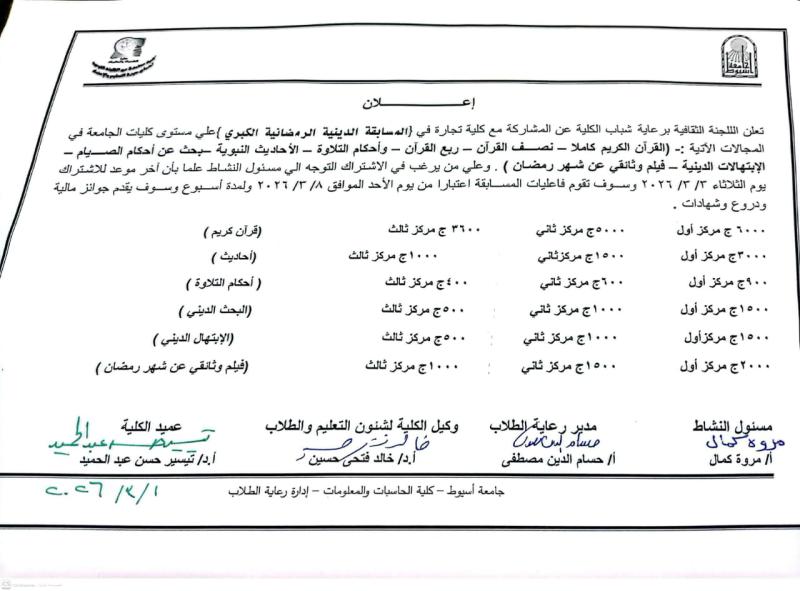

Announcement: The Grand Ramadan Religious Competition

🚨 Under the patronage of:

✨ Prof. Dr. Tayseer Hassan Abdel Hamid (Dean of the College)

✨ Prof. Dr. Khaled Fathy Hussein (Vice Dean for Education and Student Affairs)

The Cultural Committee of the Student Welfare Department announces the launch of the "Grand Ramadan Religious Competition".