In conjunction with Cybersecurity Awareness Month, the Faculty of Computers and Information at Assiut University organized a seminar entitled "Cybercrimes and Safe Internet Use."

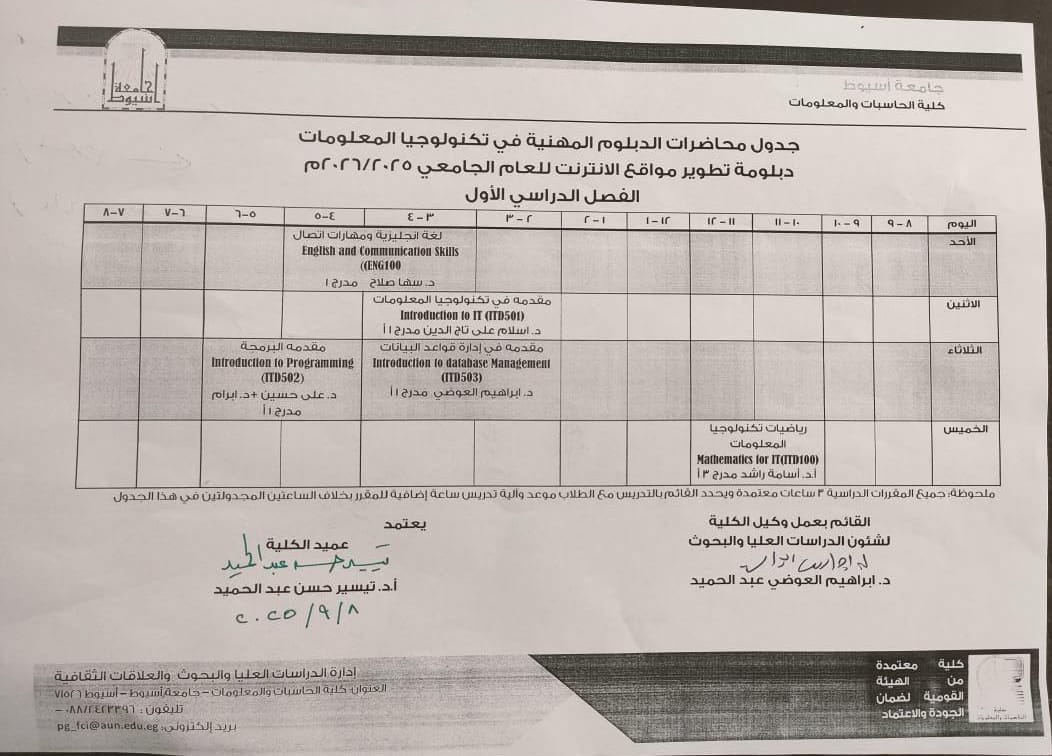

The Faculty of Computers and Information at Assiut University organized an awareness seminar entitled "Cybercrimes and Safe Internet Use," under the auspices of Professor Dr. Ahmed El-Menshawy, President of Assiut University, and under the supervision of Professor Dr. Mahmoud Abdel-Aleem, Vice President of the University for Community Service and Environmental Development, and Professor Dr. Tayseer Hassan Abdel-Hamid, Dean of the Faculty.

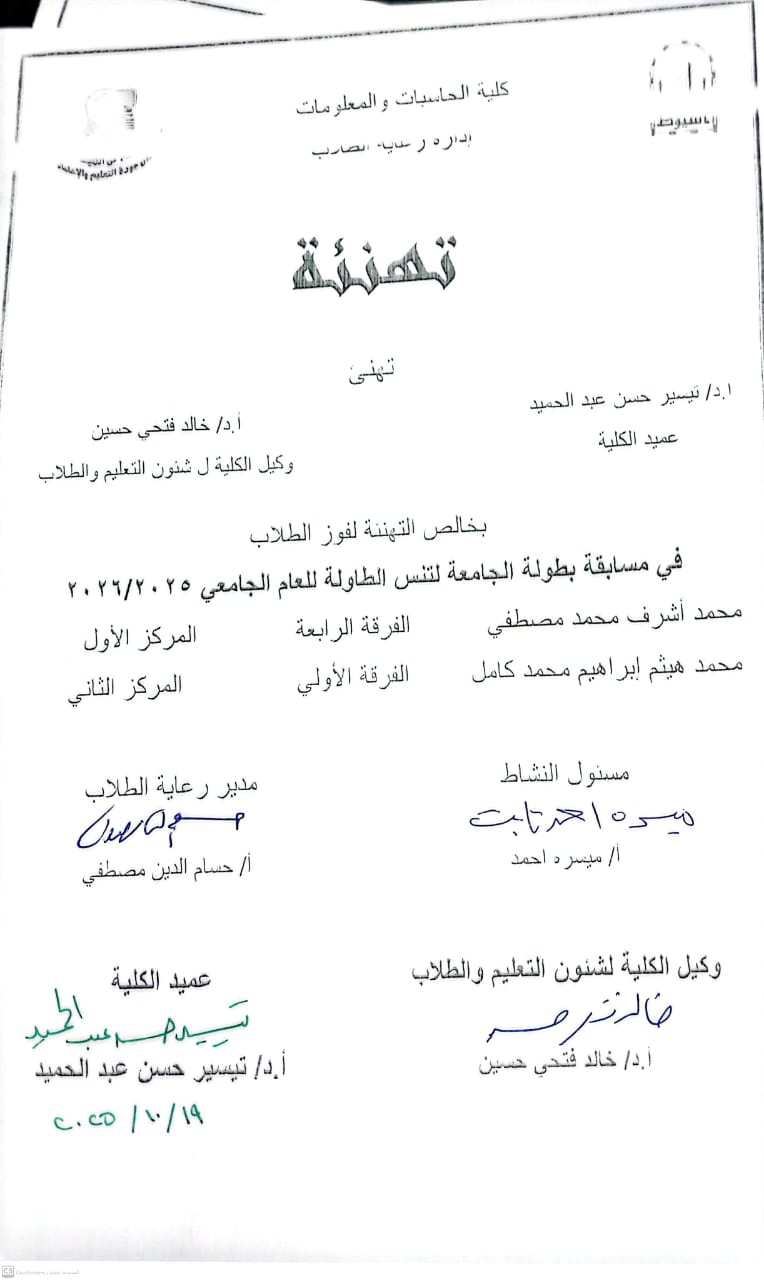

The seminar featured presentations by Dr. Dalia Nashaat, Vice Dean of the Faculty for Community Service and Environmental Development, and Eng. Ayman Ayad, Director of the Information Technology Institute (ITI) Assiut Branch. Professor Dr. Khaled Fathy Hussein, Vice Dean of the Faculty for Education and Student Affairs, also participated, along with a number of faculty members, staff, and students.

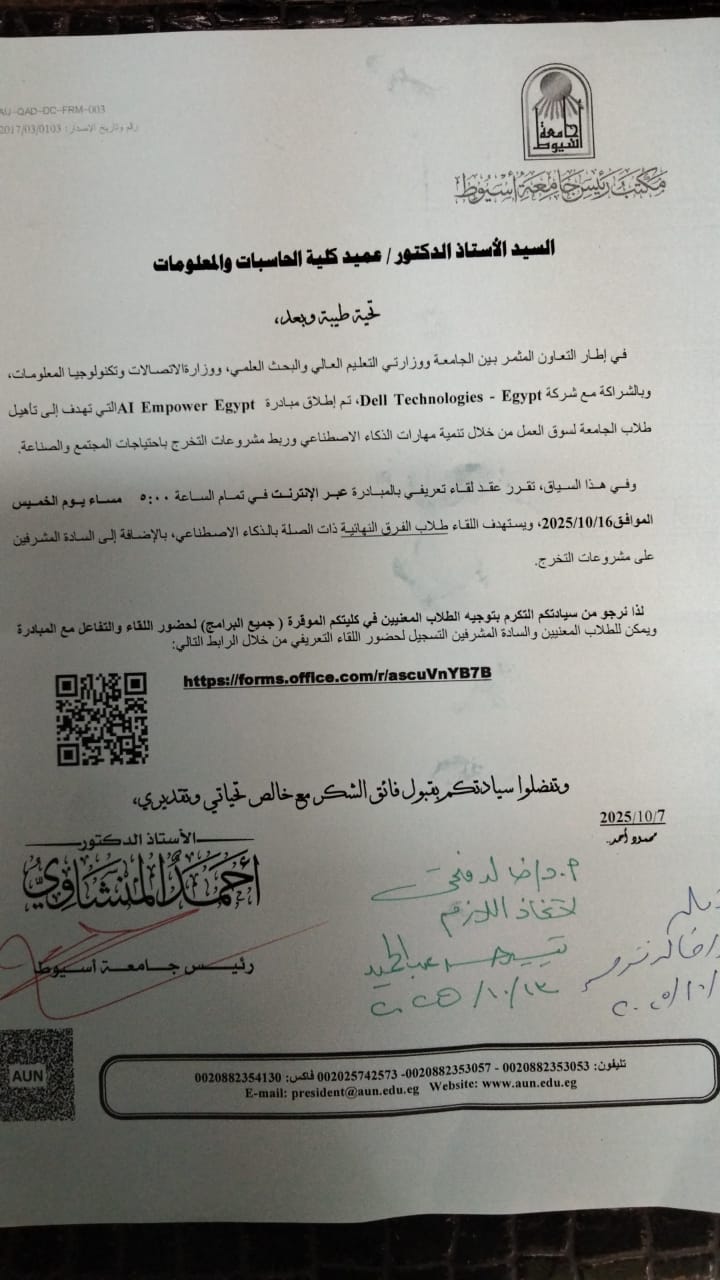

Professor Ahmed El-Menshawy, President of Assiut University, affirmed that the university is increasingly focused on promoting digital literacy among its students and staff, given the rapid advancements in information technology worldwide. He noted that October is an important occasion to highlight cybersecurity and to emphasize the importance of protecting personal and institutional data online.

He added that maintaining a secure presence in the digital space is no longer optional but a necessity for everyone, both in their personal lives and at work. He stressed that adopting secure digital habits, such as securing devices, regularly updating passwords, and staying vigilant against cyber threats, contributes to building a more aware and secure digital society.

Professor El-Menshawy emphasized that Assiut University, through organizing seminars and awareness events, strives to enhance information security among its students and staff and instill the values of digital responsibility as part of a culture of responsible citizenship.

Professor Mahmoud Abdel-Aleem explained that, with increasing reliance on the internet, cybercrimes have become among the most common and widespread crimes. He pointed out that this vast digital world is difficult to restrict or control, which makes it necessary to raise public awareness of its dangers. He encouraged everyone to benefit from the seminar's activities and the important information it offered.

For her part, Professor Tayseer Hassan Abdel-Hamid noted that the seminar coincides with Cybersecurity Awareness Month, which is observed globally during October. She affirmed the Faculty's commitment to organizing a series of events and seminars during this month to promote a culture of digital protection and raise awareness of cybercrimes among students.

During her lecture, Dr. Dalia Nashaat reviewed the concept of cybercrimes, their types, methods of committing them, and their impact on individuals and society. She also highlighted the most prominent challenges facing the digital community in light of the continuous development of the tools used to commit these crimes.

Eng. Ayman Ayad also gave a presentation on safe ways to use the internet and on protecting online accounts and personal information, stressing the vital role that IT specialists play in protecting the digital space and confronting cyberattacks.