Congratulations from Professor Dr. Tayseer Hassan Abdel Hamid to researcher Mahmoud Qutb Abdo Diab on winning the Best Research Paper award.

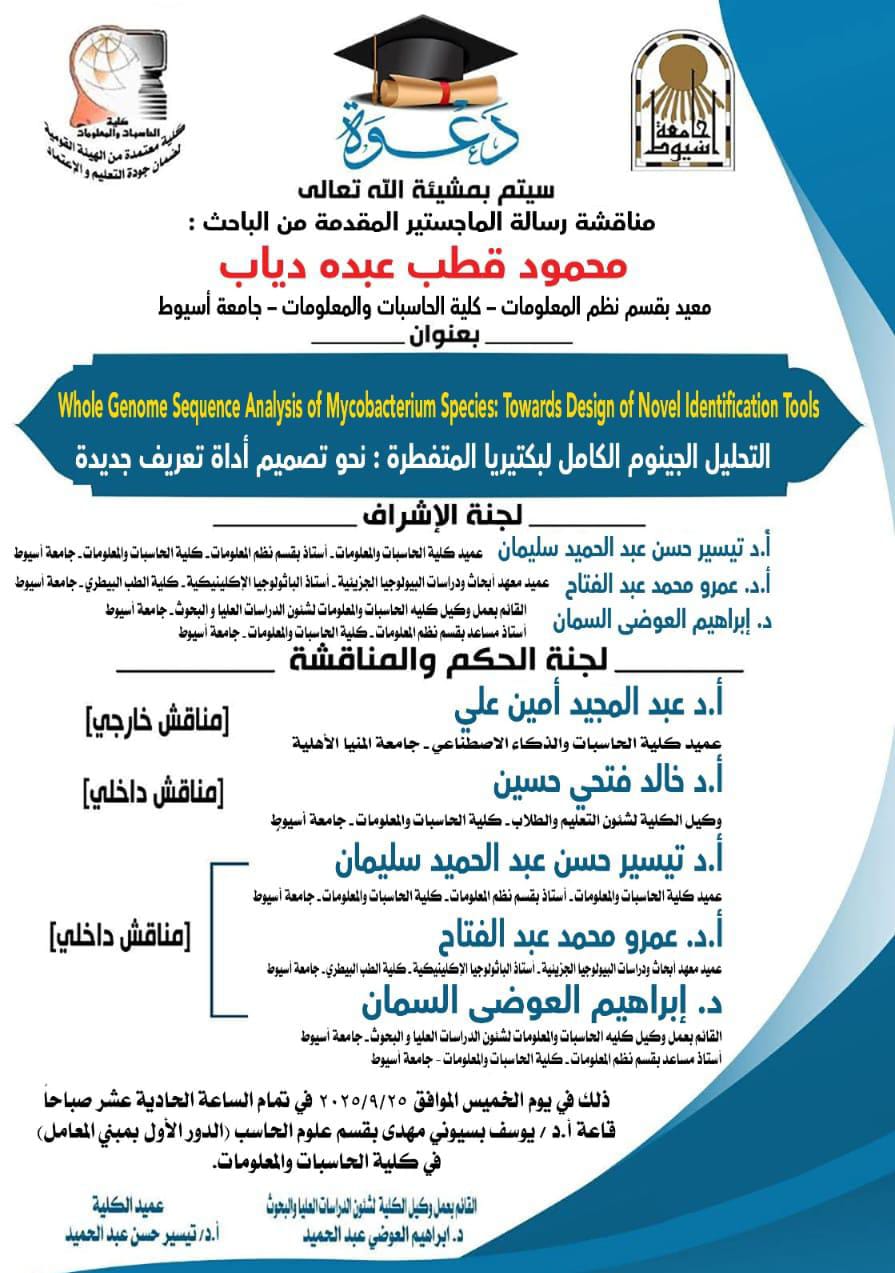

Invitation to attend the defense of the Master's thesis submitted by researcher Mahmoud Qutb Abdo Diab

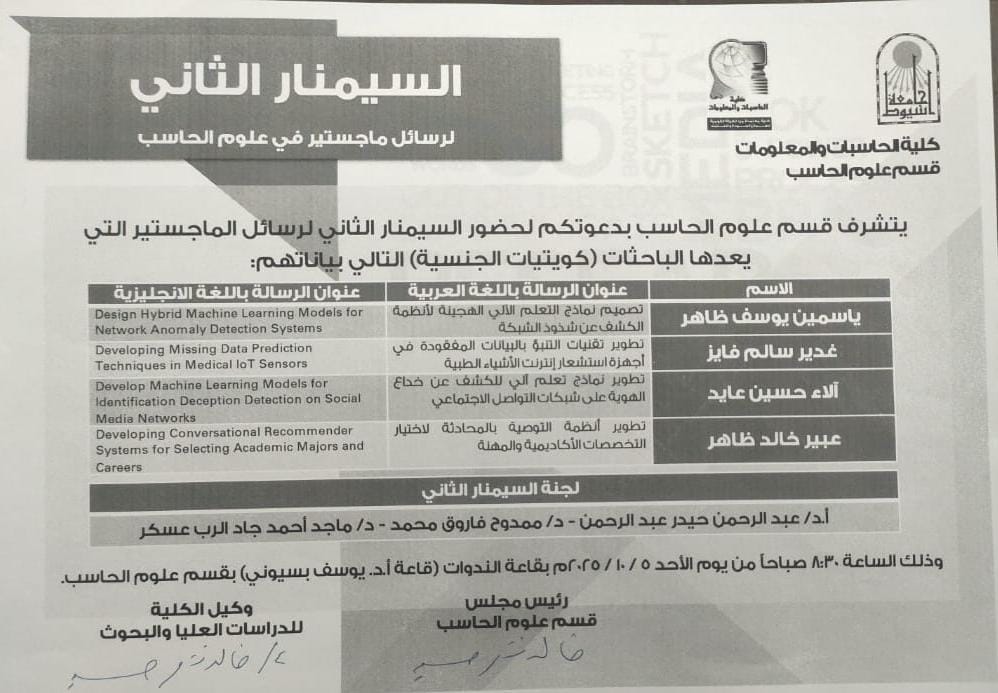

Invitation to attend the second seminar for Master's theses in the Computer Science Department

News category

Postgraduates

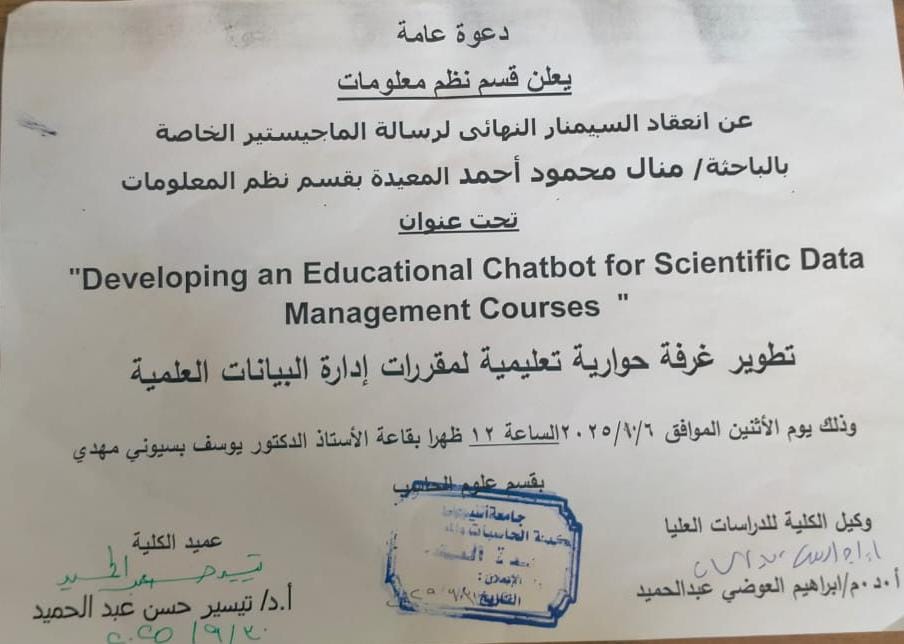

Invitation to the final seminar for the Master's thesis of researcher Manal Mahmoud Ahmed, teaching assistant in the Information Systems Department

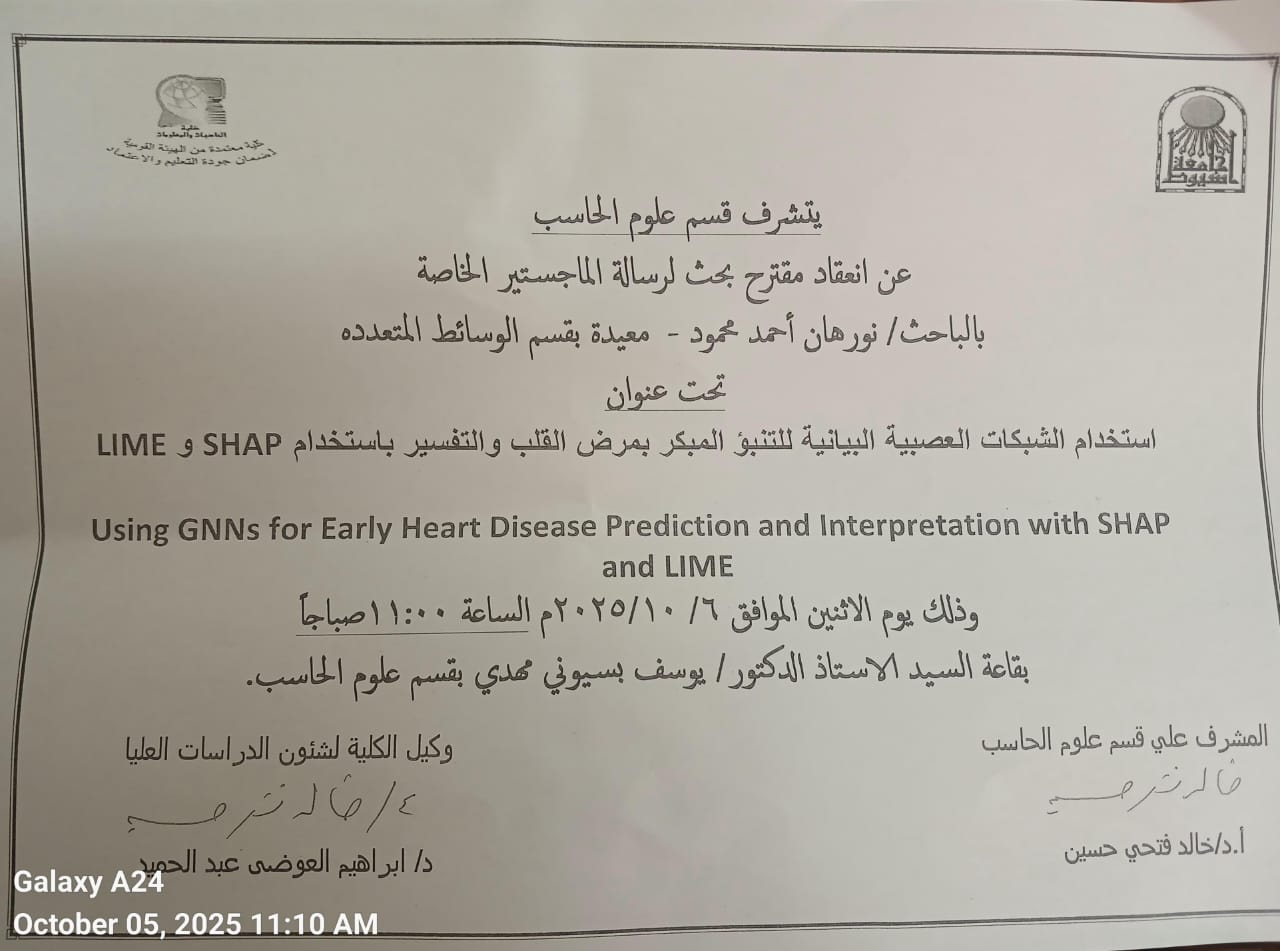

The research proposal for the Master's thesis of researcher Nourhan Ahmed Mahmoud, teaching assistant in the Multimedia Department, was presented.

Student Activities Subscription Code

The Supreme Council of Universities' vision for addressing the phenomenon of street children through universities

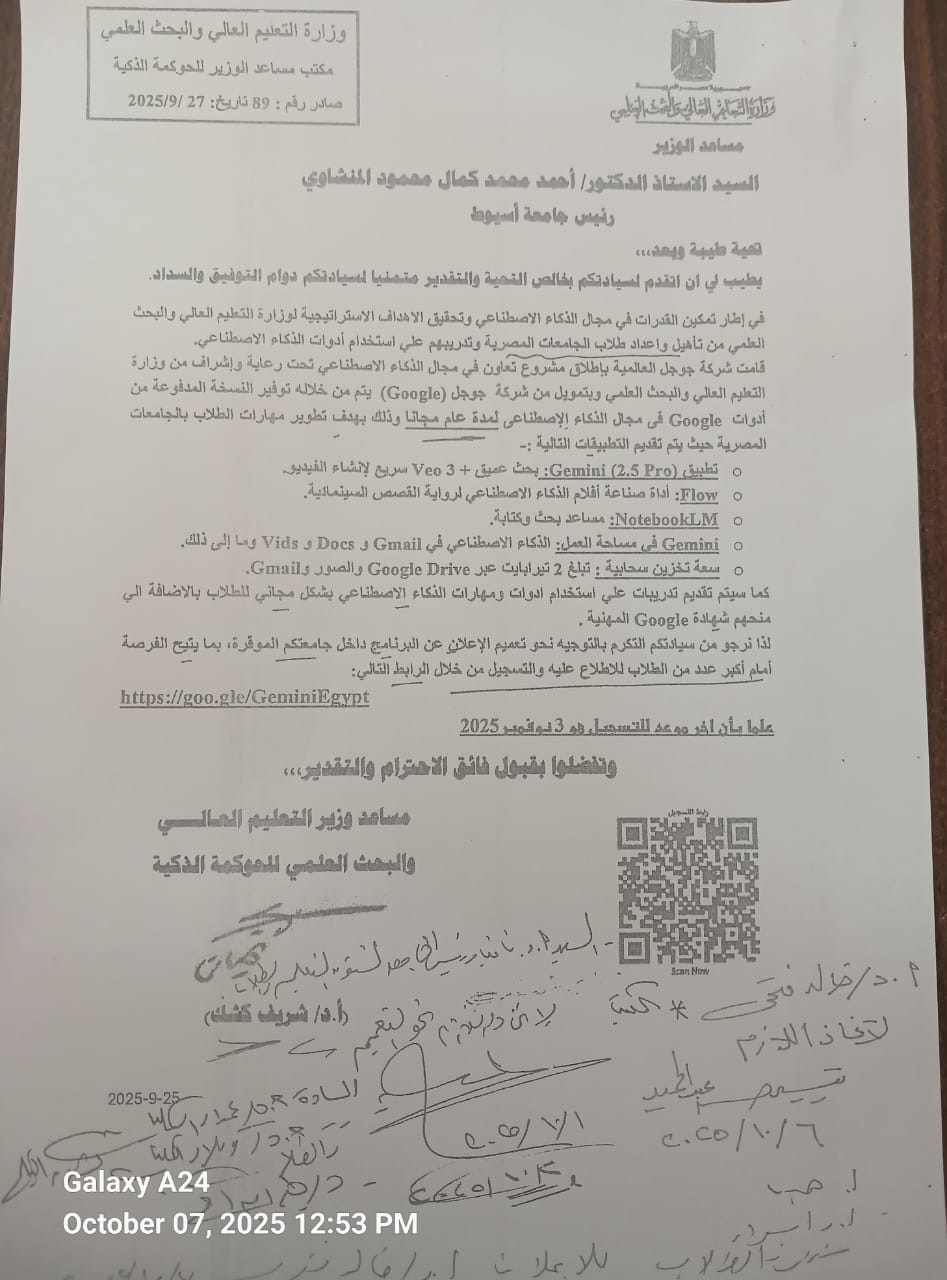

A collaborative project in the field of artificial intelligence under the auspices and supervision of the Ministry of Higher Education and Scientific Research

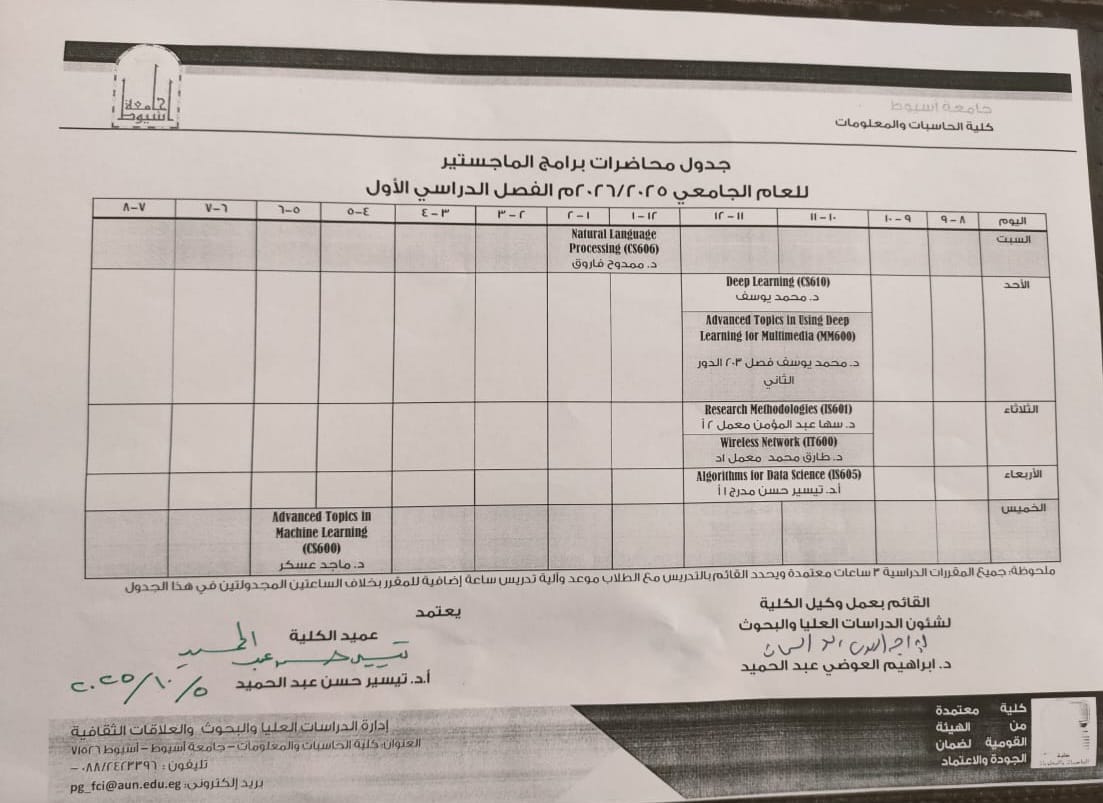

Master's Program Lecture Schedule for the Academic Year 2025/2026, First Semester