An invitation to participate in the annual scientific conference organized by the African Union Executive Commission for Scientific and Technical Research

Announcement regarding the implementation of training programs for the human resources development project in the health and education sectors

Announcement regarding opening the door to apply for the Dr. Mohamed Rabih Nasser Khalifa Award for Scientific Research in its sixth session for the year 2023

Integrated Health Convoys - Al-Tamsahiyah Village - Al-Qusiya Center - May 2023 AD

The Faculty of Medicine continues its health awareness work and free medical examinations through the integrated health convoys in Al-Tamsahiyah Village, Al-Qusiya Center, on Friday, 26 May 2023.

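Under the patronage of:

Prof. Dr. Ahmed El-Minshawy, President of the University

Prof. Dr. Alaa Attia, Dean of the Faculty and Chairman of the Board of Directors of the University Hospitals

Prof. Dr. Saad Zaki, Vice Dean of the Faculty of Medicine for Community Service and Environmental Development Affairs and General Supervisor of the Medical Convoys

Dr. Amr Mohamed Abdel-Meguid, Supervisor of the Medical Convoys Center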

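The convoy continues the leading role the Faculty plays at both the educational and service levels for its surrounding community, and builds on the tangible efforts of the Community Service and Environmental Development sector in serving the people of the neediest villages, with the participation of physicians from 5 departments.

Participating physicians:

Dr. Mohamed Ali Mahmoud El-Ghonaimy, General Internal Medicine

Dr. Mohamed Mamdouh Mostafa, Chest Diseases

Dr. Aya Mohamed Sayed, Dermatology and Venereology

Dr. Esraa Sami Abdel-Rahman, Pediatrics

Dr. Khaled Mohsen Mohamed, Orthopedic Surgery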

Participating students:

Shehab El-Din Alaa Mahmoud (intern)

Mervat Talaat Mohamed

Yara Raslan Salama

Nada Ali Abdullah

Tasneem Mohamed Hussein

Al-Shaimaa Hussein Mahmoud

Manar Magdy Ahmed

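The aim is to provide medical awareness services, examine the villagers, and dispense medicines to them free of charge through the dispensing point that the Faculty provides during the convoy.

The convoy is part of a series of integrated convoys that the Faculty launches on a regular, ongoing basis, and through which it takes part in the Decent Life (Haya Karima) initiative for villages and hamlets, sponsored by the President of the Republic. This convoy resulted in the examination of 243 cases.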

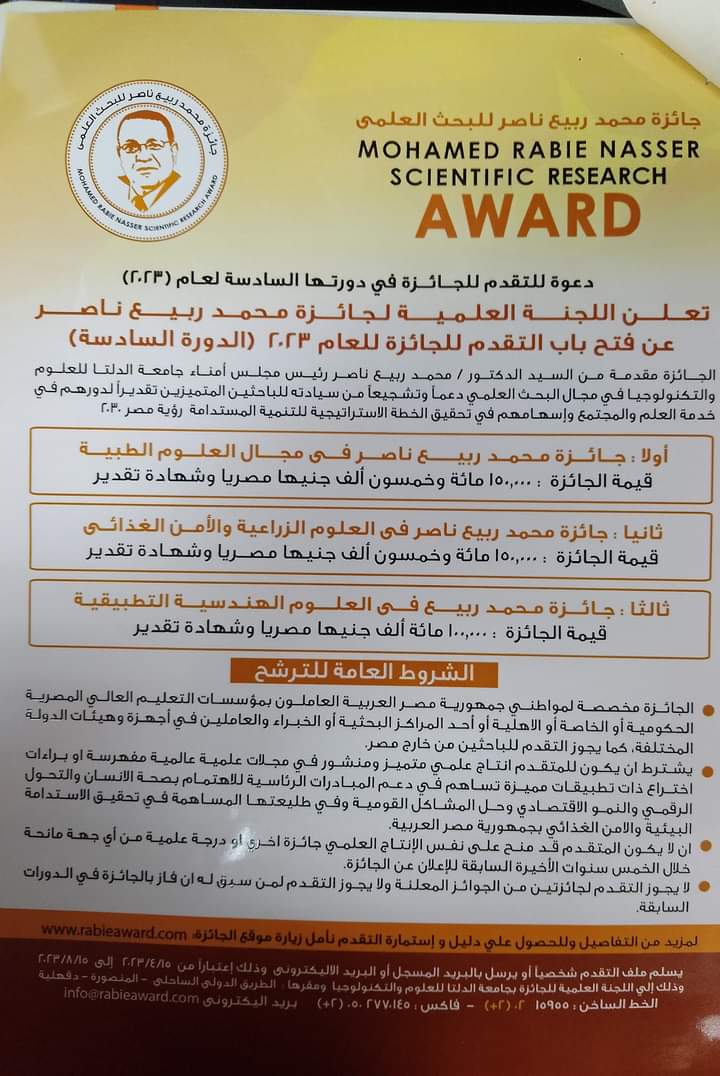

Opening the door to apply for the Mohamed Rabih Nasser Prize for Scientific Research for the year 2023 AD (sixth session)

Announcement regarding opening the door to apply for funding for scientific research for faculty members and their assistants for the fiscal year 2022-2023

Automated tool detection with deep learning for monitoring kinematics and eye hand coordination in microsurgery

In microsurgical procedures, surgeons use micro-instruments under high magnifications to handle delicate tissues. These procedures require highly skilled attentional and motor control for planning and implementing eye-hand coordination strategies. Eye-hand coordination in surgery has mostly been studied in open, laparoscopic, and robot-assisted surgeries, as there are no available tools to perform automatic tool detection in microsurgery. We introduce and investigate a method for simultaneous detection and processing of micro-instruments and gaze during microsurgery. We train and evaluate a convolutional neural network for detecting 17 microsurgical tools with a dataset of 7500 frames from 20 videos of simulated and real surgical procedures. Model evaluations result in mean average precision at the 0.5 threshold of 89.5–91.4% for validation and 69.7–73.2% for testing over partially unseen surgical techniques.
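The "mean average precision at the 0.5 threshold" reported above can be illustrated with a minimal Python sketch. It is not the authors' evaluation code: the box format `(x_min, y_min, x_max, y_max)`, the function names, and the single-image simplification are all assumptions made for illustration. A detection counts as correct when its intersection-over-union (IoU) with a not-yet-matched ground-truth box is at least 0.5.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # assumed format: (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections: List[Tuple[float, Box]],
                      ground_truth: List[Box],
                      iou_threshold: float = 0.5) -> float:
    """AP for one tool class (hypothetical helper, single-image simplification).

    detections: (confidence, box) pairs; ground_truth: annotated boxes.
    """
    if not ground_truth:
        return 0.0
    matched = [False] * len(ground_truth)
    hits = []
    # Visit detections from most to least confident.
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best_iou, best_idx = 0.0, -1
        for i, gt in enumerate(ground_truth):
            if not matched[i]:
                overlap = iou(box, gt)
                if overlap > best_iou:
                    best_iou, best_idx = overlap, i
        if best_idx >= 0 and best_iou >= iou_threshold:
            matched[best_idx] = True   # true positive
            hits.append(True)
        else:
            hits.append(False)         # false positive
    # Precision and recall after each ranked detection.
    precisions, recalls, tp = [], [], 0
    for rank, hit in enumerate(hits, start=1):
        tp += hit
        precisions.append(tp / rank)
        recalls.append(tp / len(ground_truth))
    # Make precision non-increasing, then integrate over recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(
        per_class: Dict[str, Tuple[List[Tuple[float, Box]], List[Box]]]) -> float:
    """Mean of the per-class AP values at IoU 0.5."""
    return sum(average_precision(d, g) for d, g in per_class.values()) / len(per_class)
```

In a real evaluation the detections and annotations would be pooled over all frames of the validation or test videos before the per-class AP is computed; the sketch only shows how the 0.5 IoU threshold enters the metric.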

Image Synthesis with Generative Adversarial Networks to Augment Tool Detection in Microsurgery

For the first time in the literature, we investigate the capability of Generative Adversarial Networks (GANs) for synthesizing realistic images of microsurgical procedures and augmenting training data for surgical tool detection. We employ videos from practice and intraoperative neurosurgical procedures to train and evaluate two recent GAN models that have shown promise in high-resolution image generation: StyleGAN2 with Adaptive Discriminator Augmentation and StyleGAN2 with Differential Augmentation. Models were trained with limited data for both conditional and unconditional image generation, where the conditional models generated images with and without surgical tools. Our results show that the unconditional models achieved FID scores between 6 and 25 units lower than the conditional models for the two practice datasets. The best performance (FID = 42.16 and 25.17) was achieved in the Go-around practice task and was comparable to the previous benchmark performance of StyleGAN2 with Differential Augmentation. Experts’ visual inspection showed that while synthetic images had faults that exposed their true origin to the human eye, a sizable portion of them included identifiable surgical instruments. Experiments with object detection showed that augmenting the training data with synthetic microsurgical data improved the mean average precision for detecting tool tips in practice microsurgery datasets by 3%. Future work will include improving the quality of image synthesis and investigating key visual cues in expert assessment of surgical scenes for applications in robust surgical tool detection, bimanual skill evaluation

Optimal spectral bands for instrument detection in microscope-assisted surgery

Optic image-guidance systems enable minimally invasive surgery (MIS) approaches. However, available MIS techniques limit both ergonomics and field of view (FoV), which can be detrimental to anatomical awareness and safe manipulation of tissues. Contemporary navigation techniques (i.e. neuronavigation) support spatial awareness during surgery. However, these techniques require time-consuming instrumentation and lack the real-time precision needed in soft-tissue surgery. In this work, we use the operative microscope's FoV as an unobtrusive source to support MIS navigation with micro-instrument tracking. FoV instrument tracking has been investigated in laparoscopy; however, the high magnification, selection of instruments, and bimanually variant characteristics of microneurosurgery make current computational approaches challenging to adopt. In this work, we investigate potentials of spectral
